Summarizing articles is just one of the many applications of LLMs.

“It delivers state-of-the-art performance for LLM serving using NVIDIA GPUs and allows us to pass on the cost savings to our customers.” Performance comparison

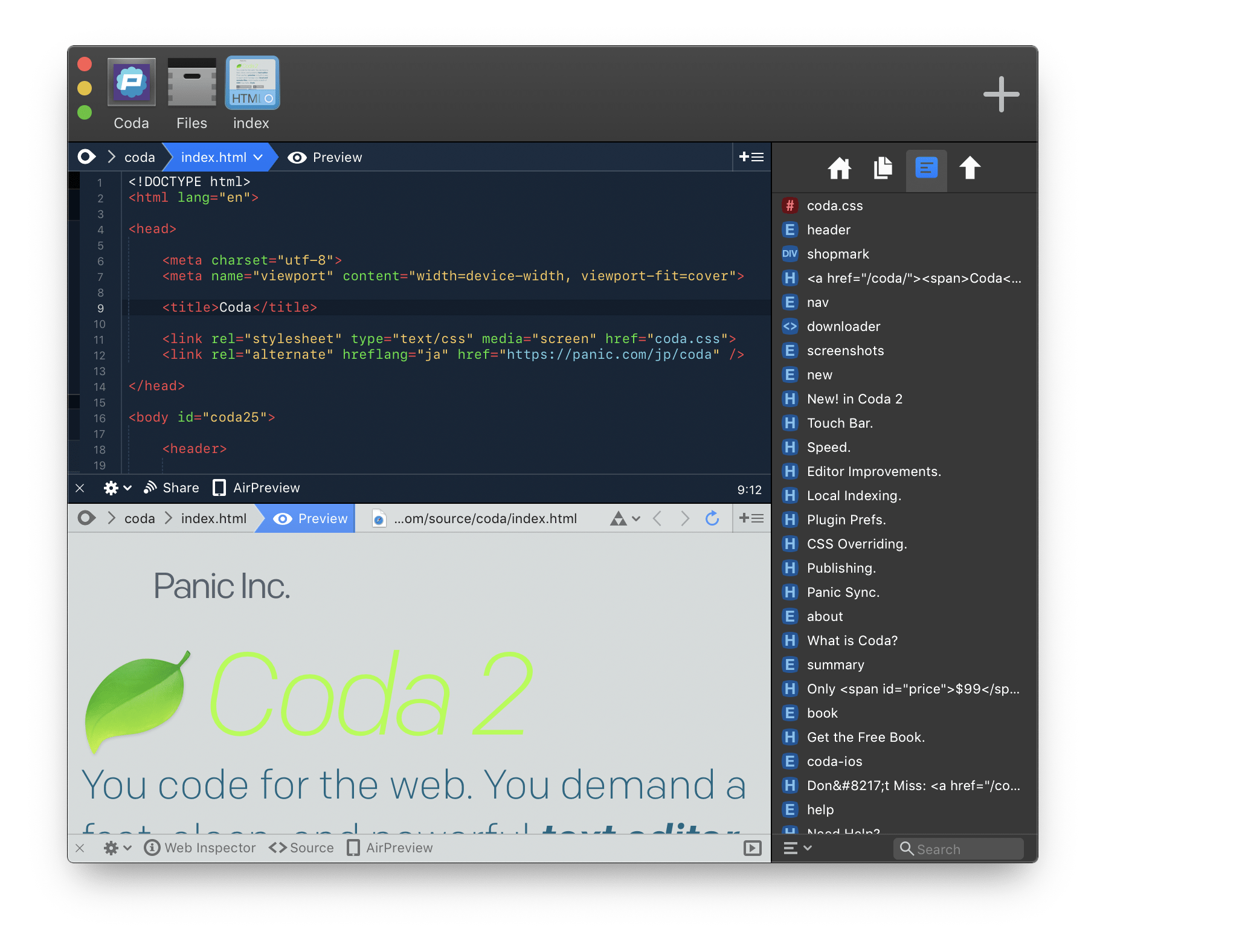

“TensorRT-LLM is easy to use, feature-packed with streaming of tokens, in-flight batching, paged-attention, quantization, and more, and is efficient,” Rao said. Naveen Rao, vice president of engineering at Databricks notes that “it has been an absolute breeze.” TensorRT-LLM improves ease of use and extensibility through an open-source modular Python API for defining, optimizing, and executing new architectures and enhancements as LLMs evolve, and can be customized easily.įor example, MosaicML has added specific features that it needs on top of TensorRT-LLM seamlessly and integrated them into their inference serving. It enables developers to experiment with new LLMs, offering peak performance and quick customization capabilities, without requiring deep knowledge of C++ or NVIDIA CUDA. TensorRT-LLM consists of the TensorRT deep learning compiler and includes optimized kernels, pre- and post-processing steps, and multi-GPU/multi-node communication primitives for groundbreaking performance on NVIDIA GPUs. Those innovations have been integrated into the open-source NVIDIA TensorRT-LLM software, available for Ampere, Lovelace, and Hopper GPUs and set for release in the coming weeks. NVIDIA has been working closely with leading companies, including Meta, Anyscale, Cohere, Deci, Grammarly, Mistral AI, MosaicML, now a part of Databricks, OctoML, Tabnine, and Together AI, to accelerate and optimize LLM inference. But their large size and unique execution characteristics can make them difficult to use in cost-effective ways. Large language models offer incredible new capabilities, expanding the frontier of what is possible with AI.
